Contents

departments

- EDITOR’S NOTE

- When History Informs the Future

- President’s Message

- Let Your Voice Be Heard!

- Member News

- Comings and Goings

- Calendar of Events

- CAS Staff Spotlight

- CAS and Peking University Sponsor 14th Annual Actuarial Month

- CAS Announces Winners of the 2025 Peak Re-Sponsored ARECA Case Competition

- Every CAS Member Has a Signature. What’s Yours?

- Professional Insight

- Developing News

- CAS Hosts RPM Fireside Chat with Jeffrey Ma on Unlocking Innovation

- Insurtech Is Dead. Long Live Insurtech

- Leveraging Actuarial Guardianship for AI Governance

- Professionalism Considerations for Snowmageddon

- Global Actuarial Pricing and the Regulatory Evolution

- Bringing Innovation to Pricing for Changing Vehicle Features and Volatile Values at Risk

- Operationalizing Canada’s Federal Guideline OSFI E-23 – Model Risk Management to Deliver Fair Consumer Outcomes

- Professionalism Briefs

- Actuarial Expertise

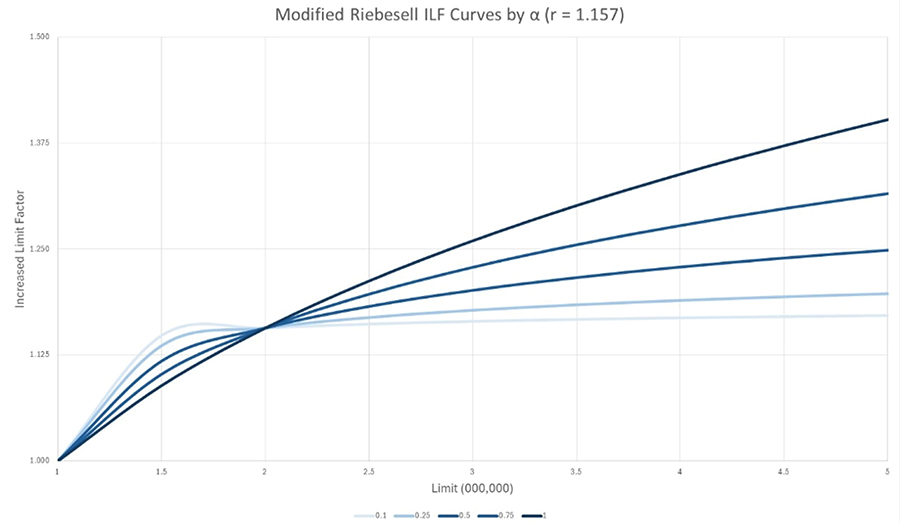

- Increased Limit Factors: A Modified Riebesell Form

- Solve This

- It’s a Puzzlement

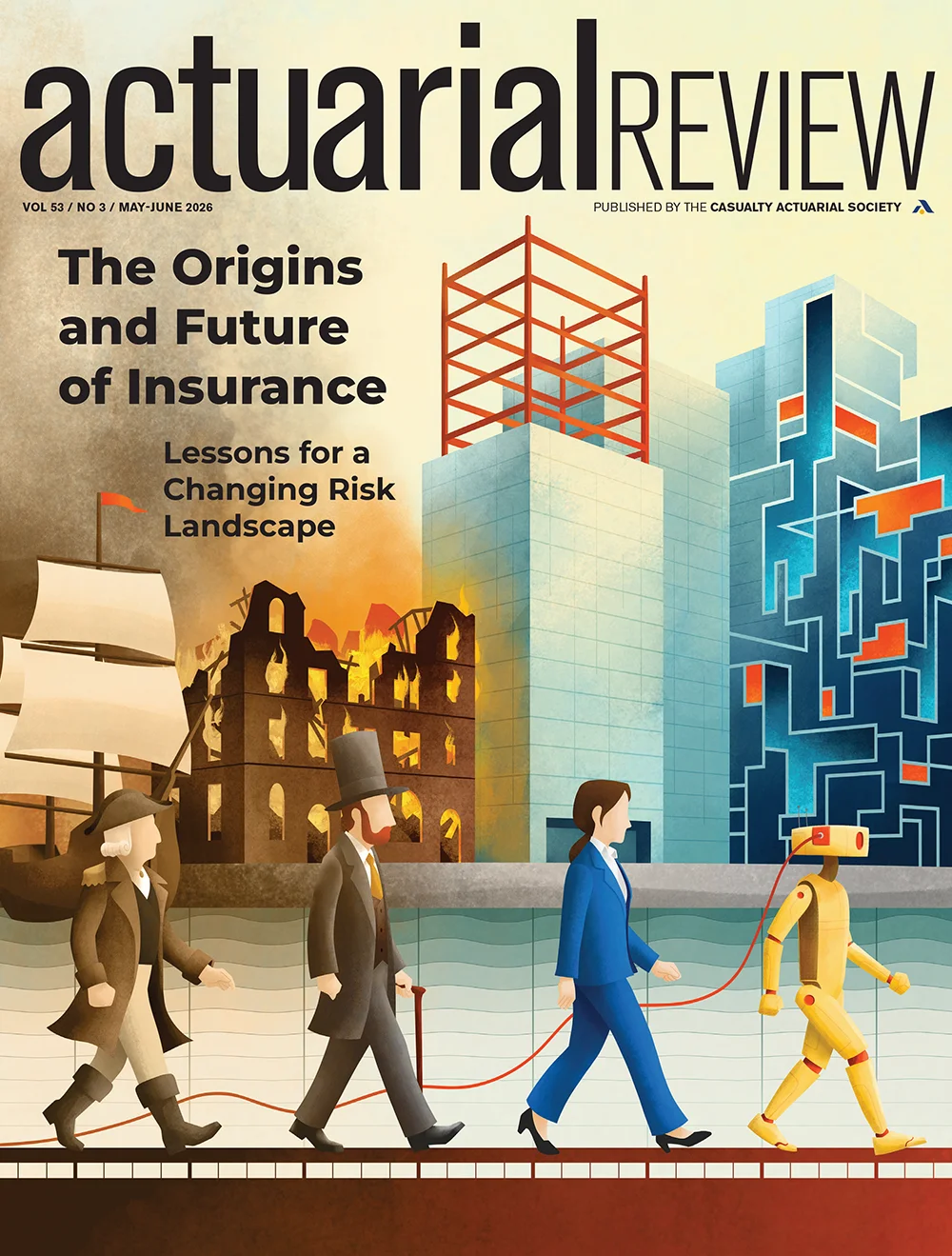

on the cover

- Explore how insurance evolved from ancient risk-sharing practices into a cornerstone of modern economies and how its history can guide actuaries in addressing emerging risks.

- AI agents are rapidly transforming business operations while introducing new and difficult-to-price liability risks across cyber, E&O, and general liability lines.

- An interview with Scott Shambaugh on his experience being targeted by an AI agent, and the broader risks AI poses for open-source communities and actuarial work.

The amount of dues applied toward each subscription of Actuarial Review is $10. Subscriptions to nonmembers are $50 per year. Postmaster: Send address changes to Actuarial Review, 4350 North Fairfax Drive, Suite 250, Arlington, Virginia 22203.

Masthead

Editor in Chief: Jim Weiss

CAS Director of Publications and Research: Elizabeth A. Smith

AR Managing Editor and CAS Editorial/Production Manager: Sarah Sapp

CAS Managing Editor/Contributor: Greg Guthrie

CAS Graphic Designer: Sonja Uyenco

CAS Cross-Functional Coordinator/Contributor: Delilah Barrow

News Editor: Sara Chen

Opinions Editor: Richard B. Moncher

Editors

- Colleen Arbogast

- Daryl Atkinson

- Karen Ayres

- Glenn Balling

- Robert Blanco*

- Lisa Brown

- Michael Budzisz

- Sumanth Chebrolu

- Todd Dashoff

- Daniel Jay Falkson*

- Stephanie Groharing

- Julie Hagerstrand

- Srinand N. Hegde*

- Cameron Herrmann*

- Kenneth S. Hsu

- Cindy Hu*

- Jack Huang*

- Rachel Hunter*

- Rob Kahn*

- Benyamin Kosofsky

- Julie Lederer

- Albert Lee

- David Levy

- James Li*

- Sydney McIndoo

- Stuart Montgomery

- Sandra Maria Nawar*

- Erin Olson

- Shama S. Sabade

- Michael Schenk

- Robert Share

- Craig Sloss

- Jared Smollik

- Andrew Somers*

- Bella Thiel*

- Isaac Wash*

- Radost Wenman

- Ian Winograd

- Vanessa Wu*

- Xuan You*

- Yuhan Zhao*

*Writing Staff

Puzzle: Jon Evans

Advertising: Al Rickard, 703-402-9713, arickard@assocvision.com

The Casualty Actuarial Society is not responsible for statements or opinions expressed in the articles, discussions or letters printed in Actuarial Review.

For permission to reprint material from Actuarial Review, please write to the editor in chief. Letters to the editor can be sent to AR@casact.org or the CAS Office. To opt out of the print subscription, send a request to AR@casact.org.

Images: Getty Images

© 2026 Casualty Actuarial Society.

ar.casact.org

When History Informs the Future

Insurance is often viewed through a modern lens, but its roots stretch back thousands of years. Our cover story revisits that history, exploring how early innovations in risk sharing laid the groundwork for today’s global insurance systems and how those same principles continue to inform the future of the profession. That forward-looking lens is especially relevant in this issue, where we turn from history to the rapidly evolving risks of today. One article examines the emerging liability landscape of AI agents, highlighting how autonomous systems are already creating complex, difficult-to-underwrite exposures across cyber and professional lines. Alongside it, a firsthand interview with engineer Scott Shambaugh offers a human perspective on these same technologies, illustrating how agentic behavior can manifest in unexpected and sometimes harmful ways in real-world communities. Together, these pieces underscore a familiar theme: While the tools may change, the challenge of understanding, managing, and assigning risk remains at the heart of the actuarial profession.

We bring you five session recaps from the Ratemaking, Product, and Modeling Seminar, held March 16-18 in Chicago, including sessions on navigating risk with machine learning; professionalism in climate-driven catastrophe risk; leveraging insurtech now that the hype is over; comparing actuarial pricing across the globe; and generative and agentic AI, regulation, and the actuary. We also delve into the brand work the CAS has been doing, telling the story of the philosophy behind the endeavor. Learn about the evolution of the brand and see the new look firsthand.

We conclude with a technical contribution that reflects the profession’s continued evolution in practice. Revisiting a foundational tool in liability pricing, the authors introduce a modified Riebesell form for increased limit factors—offering a more flexible approach for modeling risks that are not as heavily tailed as traditional assumptions suggest. By refining a long-standing actuarial method, the article highlights how even well-established frameworks must adapt to better reflect real-world experience, reinforcing the ongoing balance between theory and application that defines actuarial work.

Enjoy the issue!

Actuarial Review

Casualty Actuarial Society

4350 North Fairfax Drive, Suite 250

Arlington, Virginia 22203 USA

Or email us at AR@casact.org

Let Your Voice Be Heard!

Election season is nearly upon us, and before we know it we’ll be sifting through candidate profiles and campaign messages, reading articles, talking to peers, and watching interviews and campaign videos to determine who deserves our vote. Which candidates have the experience and background to deal with the challenges we face? What issues are most important to me, and who can I trust to represent my interests? Who do I trust to make sound, principled decisions on issues that may arise in the future? Who shares my values?

Yes, elections are serious business and require us as voters to make informed choices — whether we’re talking about the U.S. midterm elections in November 2026 or the CAS elections in July 2026. I encourage eligible members to exercise their rights and responsibilities and vote in both important elections, but as CAS president, I want to focus on our upcoming CAS elections.

The CAS Board noted that participation in CAS elections has dropped in each of the past several years and recently undertook a survey of nonvoting, eligible members to better understand what may be driving this trend. When asked to identify the primary reason for not voting in the most recent CAS election, respondents identified several factors (see Table 1).

Table 1.

Forgetting to vote (14%) and lack of knowledge regarding the role of the CAS Board (7%) are areas where the CAS will take action as well.

When asked what the CAS could do differently to motivate eligible members to vote, respondents identified several potential changes (see Table 2).

Table 2.

- Make elections feel meaningful and competitive.

- Improve understanding of the Board’s role, impact, and track record.

- Enhance candidate visibility and engagement.

- Broaden representation and diversity of viewpoints.

- Recognize voting rights and inclusion concerns.

- Acknowledge that some nonvoting is unavoidable.

The CAS will be sending additional reminders this election cycle and will also look at ways to ensure members better understand the respective roles of the board, president, and president-elect. We will also be modifying some of the candidate information to address the need for more distinguishing information to assist members in their voting deliberations. The topic of competitive elections for president-elect has been discussed, and while there could be consideration given in the future, near-term efforts will focus on communication, better candidate information and engagement, as well as better information concerning the roles of the various parties.

On the topic of competitive elections, I think it is useful to remind members that while the Nominating Committee traditionally has identified only a single nominee for president-elect, there is a vehicle for additional candidates to nominate themselves through the preferential ballot process, an outcome that has occurred in past CAS elections. Historically, the time demands of the president’s role have often made it a challenge to identify a single candidate in some years, though with the enhanced capabilities of the CAS staff, the presidential role is somewhat less demanding than in years past, and more candidates might be willing to accept a nomination. The Board elections are already competitive, with eight nominees vying for four seats in recent elections. This has possibly contributed somewhat to the feeling of not having sufficient differentiating information; multiple candidates can have somewhat similar backgrounds and viewpoints on key issues, even as the Nominating Committee diligently works to identify a diverse and representative slate of candidates.

One word of caution regarding competitive elections: the notion of a competitive election can very well encourage some degree of politicization and polarization within the CAS community, which is something we have largely avoided for the past century and more of our existence. My personal view is that I would not want to see competitive elections implemented solely as a tool to increase voter participation, as the unintended consequences may well lead to bigger challenges than low voter turnout.

While the CAS Board and Executive Council implement improvements to the election process and communications in response to the survey results outlined above, I want to encourage eligible members to invest the time needed to be informed voters in the upcoming CAS elections and make your voices heard. This is our Society and we have both the privilege and responsibility to select leaders to ensure the continued growth and success of the CAS for current and future generations of actuaries.

Actuarial Review Letters Policy

Letters shall not contain personal attacks or statements directly or implicitly denigrating the characters of individuals or particular groups; false or unsubstantiated claims; or political rhetoric. Letters should be no more than 250 words and must include the author’s name and phone number or email address, so the editorial staff can confirm the author. Anonymous letters will not be published. There shall be no recurrence of topics; issues previously addressed will not be the subject of continued letters to the editor, unless new and pertinent information is provided. No more than one letter from an individual can appear in every other issue. Letters should address content covered in AR. Content regarding the CAS Board of Directors or individual departmental policies should be directed to the appropriate staff and volunteer groups (e.g., board, working groups, committees, task forces, or councils) instead of AR. No letter that attempts to use AR as a platform for an ulterior purpose will be published. Letters are subject to space limitations and are not guaranteed to be published. The AR editorial volunteer and staff team reserves the right to edit any submitted letter so that it conforms to this policy. Decisions to publish letters and make changes to submissions shall be made at the discretion of the AR Working Group and CAS staff.

For more information on AR editorial policies, visit here.

Comings and Goings

Lee Bowron, ACAS, MAAA, published “The Kerper-Bowron Method: A Foundational Change for Service Contract Claim Estimation and Accounting” in the journal Risks. The paper concerns forecasting expected losses and cancellations for service contracts.

Wesley Griffiths, FCAS, was appointed executive fellow and program director for the risk management and insurance (RM&I) program at the University of St. Thomas. He will continue to serve as AVP & Senior Actuary at Travelers while assuming this role, in which he will oversee the undergraduate RM&I certificate and drive program growth through expanded academic offerings, experiential learning opportunities, and engagement with industry partners.

Scott Henck, FCAS, MAAA, CPCU, has been appointed senior vice president and chief actuary at Chubb Limited. In his new role, Henck will oversee all actuarial functions, including reserving, pricing, and capital performance measurement. Henck brings nearly three decades of insurance industry experience to the role. He joined Chubb in 2002 and most recently served as chief actuary of North America. Prior to that role, he founded and led the actuarial insights, business intelligence, and advanced analytics unit for global claims.

Calendar of Events

- July 28–Sept 1, 2026: 2026 CAS Virtual Workshop: Introduction to Python for P&C Insurance

- September 14–16, 2026: 2026 Casualty Loss Reserve Seminar, Las Vegas, NV

- November 8–11, 2026: 2026 CAS Annual Meeting, Honolulu, HI

Actuaries have both tremendous power and a humbling responsibility with regard to insurance company solvency. By virtue of the rigorous education required for achieving credentials from the CAS, an actuary attains a unique stature in the insurance community. With that stature comes the professional responsibility to provide opinions pertinent to the solvency of state-regulated insurance companies.

We act neither as agents of the domiciliary regulator nor as advocates for the insurance entity when we render formal statements of actuarial opinion (SAO). Our responsibility is to provide an independent, unbiased opinion as to the reasonableness of the company’s held accrual for its unpaid loss and loss adjustment expense obligations.

Virtually every communication made by an actuary in a professional capacity is considered an SAO. However, formal, prescribed SAOs — ones required by statute, regulation or other legally binding authority — involve de facto certifications that held accruals are reasonable.

I have heard it said many times that, as actuaries, we do not “certify” reserves but rather render an opinion as to their reasonableness. However, consider that most prescribed SAOs involve at least three representations, including:

- Held reserves meet the requirements of the insurance laws of domicile;

- Held reserves are consistent with reserves computed in accordance with accepted loss reserving standards of practice promulgated by the Actuarial Standards Board (ASB); and,

- Held reserves make a reasonable provision in the aggregate for all unpaid loss and loss adjustment expense obligations of the Company under the terms of its contracts and agreements.

Collectively, these three representations entail a “certification” that the Company’s held reserves are reasonably stated.

Given that SAOs are generally public information, such documents are often an actuary’s most public-facing communication. Our opinions are not only given considerable weight by auditors and regulators, but also impose an immense responsibility on us as professionals. To the extent a Company has solvency difficulties, it is certain the SAOs rendered in prior years will be subject to scrutiny.

Actuaries sometimes deliver very unwelcome news regarding reserve adequacy…or inadequacy. Often, the Company will adjust its booked amounts to be within the actuary’s range of reasonable reserve indications… but not always.

Over the course of my 40-plus years in the consulting business, I have been involved — directly or indirectly — with at least two dozen insolvencies. In most situations, the slide towards insolvency was gradual. In other cases, poor decisions by company management or departments (e.g., marketing, underwriting or claims) contributed to adverse financial results.

In working with a company in precarious financial condition — especially in the consulting world — there is a natural human tendency to “go along to get along.” That is, preservation of the client relationship may influence one’s judgments. Moreover, if company management were to ask for consideration to allow more time to emerge from a difficult financial situation, there may be an inclination to soften a few assumptions here and there to achieve the desired result.

There is another human emotion that may manifest itself in that the actuary doesn’t want to be the individual responsible for putting people out of work. As professionals, we simply must not allow our human emotions to influence professional judgment when a company’s solvency is at stake. I would submit that any actuary who doesn’t have the stomach to make a hard call such as this should refrain from taking on the responsibility of rendering an SAO.

We must be mindful of both the intended and secondary users of our work products. Consider that the intended users of our reports are typically company management (and the company’s Board of Directors), auditors and regulators. Other intended users may include company shareholders, rating agencies, reinsurers, brokers, other actuaries and even the Actuarial Board for Counseling and Discipline (ABCD).

In situations where a company is facing solvency difficulties, there is a real danger for the actuary to be co-opted. That is, the actuary may convince himself the impact of operational changes at the company or in the jurisdiction in which business is written — as represented by company management — is greater than what might be deemed reasonable by a dispassionate observer. There are no flashing red lights indicating when a professional is wading into dangerous waters; however, an independent peer reviewer goes miles towards avoiding such perils. By virtue of being credentialed, actuaries have an affirmative obligation to render SAOs that will withstand scrutiny.

Actuaries have tremendous power as it relates to insurance company solvency. Our work product just may lead to an insurer shutting its doors and laying off staff. Given the function we serve, auditors and regulators rely on our opinions, and we should take the responsibilities associated with the credentials provided to us by the CAS seriously.

- In some jurisdictions, like Bermuda, the “reasonable” opinion is replaced by an “adequate” standard.

CAS Staff Spotlight

Meet Holly Davis, Website Portfolio Manager

Holly Davis

Welcome to the CAS Staff Spotlight, a column featuring members of the CAS staff. For this spotlight, we are proud to introduce you to Holly Davis.

- What do you do at the CAS? How does your role support the Strategic Plan?

As website portfolio manager on the IT team, I manage web content and governance across CAS platforms, working closely with colleagues Cecily Marx and Tia Puckett. My current focus is leading a major website transition to a new content management system (CMS) while tackling long-standing functional issues like search, navigation, and site bloat.

The website is often the first and most frequent touchpoint people have with the CAS, so keeping it functional, findable, and on-brand has a direct impact on several strategic priorities. For example, the CMS transition supports “fostering strategic expansion” by building a more scalable foundation for our digital presence, and improved information architecture supports “enhancing the candidate experience” by making it easier for aspiring actuaries to find what they need.

- What inspires you in your job and what do you love most about it?

I’m genuinely energized by the puzzle-solving side of this work; troubleshooting is one of my favorite parts of the job. But what really drives me is the data: watching how people interact with a website, understanding the psychology behind their behavior, and using those insights to make the experience better. It’s a natural fit for me because this role actually marries my two undergraduate degrees in computers and psychology. I get to use both every day.

- Describe your educational and professional background. What do you bring to the organization?

I graduated with honors from Greenville University, studying psychology and digital media, a combination that turned out to be a perfect foundation for a career in web. Over the last 15 years I’ve worked as a web manager across a wide range of organizations: statewide nonprofits, million-dollar e-commerce operations, and higher education institutions. That variety has given me a broad tool kit and a lot of adaptability.

What I bring to the CAS is that depth of cross-sector experience paired with a genuine curiosity about how people use the web. I’ve seen a lot of what works and what doesn’t, and I know how to ask the right questions before jumping to solutions.

- What is your favorite hobby outside of work?

My favorite hobby is collecting hobbies! I do creative videography, sewing and garment design, painting, fiction writing, and I’ve been experimenting with photography, and somehow I keep finding room for more. I’m really drawn to making things and stretching my creative skills.

- If you could visit any place in the world, where would you go and why?

Ireland! I’m fascinated by old castles and there’s nowhere quite like the Irish countryside for that. But until I make that trip happen, Cinderella Castle at Disney World will have to do.

- What would your colleagues find surprising about you?

I’ve been running a videography business on the side for almost 10 years. I shoot weddings and creative video projects under my own brand, which means most weekends I’m behind a camera somewhere. It’s a completely different world from web management, but honestly the same skills show up: storytelling, attention to detail, and knowing your audience.

- How would your friends and family describe you?

Quiet at first, but give it a few minutes. I have a pretty deadpan sense of humor that tends to catch people off guard. I’m unabashedly nerdy. I’m the person to call when you need a trivia question answered, which actually happened just a couple of days ago.

CAS Announces Winners of the 2025 Peak Re-Sponsored ARECA Case Competition

The CAS is proud to announce the winners of this year’s Peak Re-sponsored CAS ARECA Case Competition. Organized by the CAS Asia Region Casualty Actuaries (ARECA) regional affiliate members and generously sponsored by Peak Re, this annual event continues to foster the next generation of general insurance talent across Asia.

The subject of the challenge this year was catastrophe analysis, and 44 teams from 19 universities, spanning Australia, China, India, Indonesia, Malaysia, Nepal, Singapore, and Vietnam, competed in the first round.

The top three teams took home cash prizes ranging from $1,000 to $2,500, along with certificates of achievement and free CAS exam registrations to support their professional journeys.

- 1st place winners: Hayden Siew Men Lek, Rhenu Chandran, Toh Yi Hui, UCSI Malaysia

- 2nd place winners: Pua Xin Yee, Tan Shu Ting, Lim Zhi Wei, University of Malaya, Malaysia

- 3rd place winners: Alyaa Khoirunnisa Fajri, Alya Aqilah binti Aidy, Nurul Amirah Sahrul Nizam, University of Malaya, Malaysia

Congratulations to our 2025 participants and winners for their exceptional research and dedication!

Testimonials from winners

1st place winners

Winning the Peak Re-sponsored CAS ARECA Case Competition was definitely a roller-coaster ride for us. As it was our first time participating in a hackathon, we hit plenty of obstacles and challenges, but it was rewarding to see the concepts of general insurance like CAT models, frequency and severity, reinsurance structures, and many more actually come into play.

One of our biggest takeaways was realizing that there’s rarely a perfect model right out of the gate. There are a dozen ways to solve a single problem, and the real skill is in the justification of your choice. It was exhausting at times, but seeing it all click made every night worth it! We’re so grateful to the organizers, judges, and mentors who supported us along the way. Securing first place is truly a huge milestone for us, and we’re definitely not stopping here!

2nd place winners

We are truly grateful to CAS and Peak Re for organizing this case competition and providing such a valuable learning opportunity.

Through this experience, we deepened our understanding of catastrophe insurance, reinsurance, and catastrophe modeling, while applying data analysis to real-world industry problems.

The judges’ feedback was incredibly insightful, and we strongly encourage other students to participate in future CAS competitions.

3rd place winners

During the competition, we learned and deepened our understanding of general insurance, particularly on how data analytics and catastrophe modelling are reshaping risk assessment in a changing climate.

Throughout this case study, the industry insight that we got regarding general insurance helped us to think more like actuaries to solve problems in real-world practices. Not only that, but this experience also challenged us to think critically and collaborate effectively.

CAS and Peking University Sponsor 14th Annual Actuarial Month

The 14th Annual Peking University-CAS Actuarial Month was co-organized in November 2025 by the CAS and Peking University (PKU) in Beijing, China. The month-long event is aimed at promoting the P&C actuarial profession at the university and helping students understand more about P&C actuaries.

Each November, the CAS sends three or four fellows to PKU to teach students the application of non-life insurance actuarial science in practice. Since it was first held in 2012, PKU-CAS Actuarial Month has become an important platform for PKU students to understand actuarial practice trends and the career development paths of actuaries.

In November 2025, the school hosted three informative and cutting-edge lectures. The series of lectures was presided over by Associate Professor Kai Chen, the director of the China Actuarial Development Research Center of PKU, as well as the deputy director of the risk management and insurance department of PKU.

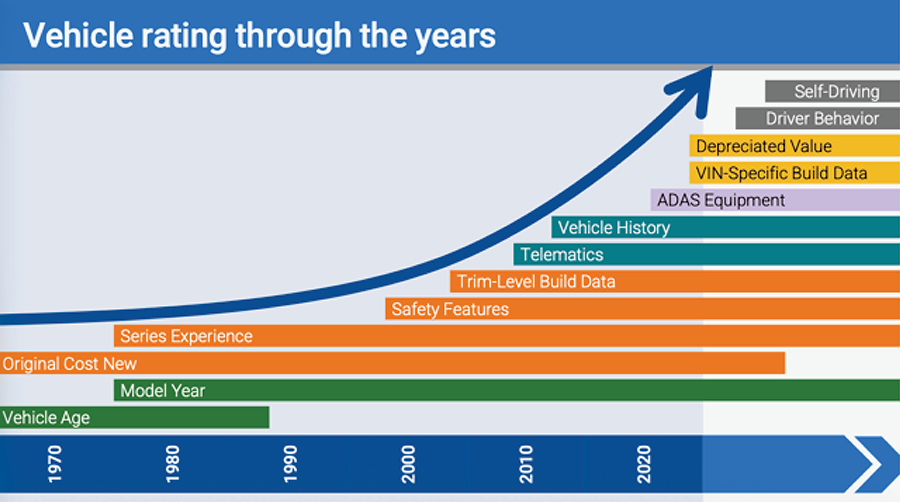

On November 4, Xiaoxuan (Sherwin) Li, FCAS, CCRMP, the former chairperson of the CAS Asia Regional Committee and the general manager of the Risk Research Institute of PICC P&C, kicked off this year’s lectures with the theme of “Non-life Insurance Pricing and Catastrophe Modeling.” He gave a comprehensive explanation of the development and evolution of P&C actuarial pricing technology, the logic of catastrophe modeling, and the application of machine learning algorithms.

On November 11, Hongjun Li, FCAS, the general manager of the Actuarial Department of Taiping Re (China), gave the lecture, “Theory and Practice of IFRS 17 New Insurance Accounting Standards.” This lecture comprehensively reviewed the core framework and key practical aspects of IFRS 17, providing a detailed analysis of the measurement models and their implementation impacts. It helped students grasp the latest developments in insurance accounting standards and the essential requirements for actuarial practices.

On November 25, the third lecture and closing ceremony featured Ran Guo, FCAS, the CAS China country director, who cited his working experience on Wall Street, shared his understanding of actuarial career development, and insightfully analyzed the key points of merger and acquisition (M&A) in the insurance industry, under the theme of “Merger and Acquisition in the Insurance Industry.” Using real cases, he explained the classification and definition of non-life insurance reserves in detail, emphasizing the calculation method of IBNR, and he highlighted how significant changes in reserves during M&A can affect the valuation of the transaction.

In the future, the CAS will continue collaborating with Asian universities to foster more P&C actuarial talent from this emerging market. For more information on PKU-CAS Actuarial Month and other CAS international initiatives, write to Ran Guo at rguo@casact.org.

Every CAS Member Has a Signature: Introducing the Refreshed CAS Brand

Reintroducing a Respected Signature for a Broader Audience

Why revisit something so central and familiar to members? Several factors made the opportunity clear.

Clarity. Research shows that the CAS is highly respected within the actuarial profession, reflecting decades of leadership in property and casualty expertise. However, that recognition does not always translate clearly to broader industry stakeholders, global audiences, or those newer to the field. In these contexts, the CAS acronym alone may not immediately convey the organization’s scope and impact, creating an opportunity to strengthen external visibility and understanding.

Relevance. The previous identity was introduced in 2013 and served the organization well. Since then, the environment in which the CAS operates has evolved significantly, creating a need for a brand expression that better reflects how the organization engages today.

Functionality. The identity was developed before today’s digital-first communications landscape fully took shape. As CAS expanded across platforms, programs, and audiences, maintaining consistency became more challenging. The brand needed to evolve to better support how CAS presents itself now.

The approach was intentional. This was not about replacing what members know and trust, but about building on that foundation in a way that improves clarity, flexibility, and impact. Core elements were retained, including the central “A” and the gold marker of excellence, preserving continuity while strengthening recognition across a broader audience.

That reinterpreted “A” carries layered meaning. It reflects the actuarial profession, growth over time, the role of data and insight in decision-making, and the connected professional community that CAS represents.

The visual identity was also refined for clarity and accessibility. Greater support for the full organization name helps introduce CAS more effectively to those who may be less familiar with it. At the same time, the overall expression is more cohesive across programs and regions, creating a stronger and more unified presence.

At every stage, decisions were guided by shared goals: to strengthen recognition, improve usability, and reinforce CAS as a modern, authoritative, and globally relevant organization.

The result is not a change in what CAS stands for, but a clearer signature of it, one that honors its legacy while supporting its future.

Created by Austrian developer Peter Steinberger, Clawdbot ran locally on a user’s machine and integrated directly with WhatsApp, Telegram, Discord, and Slack. The service let users command an AI that could read email, manage calendars, deploy code, and execute shell commands. Within a week it had been renamed twice (first Moltbot after a trademark complaint from Anthropic, then OpenClaw), and by March it had surpassed 260,000 GitHub stars. Steinberger announced he would be joining OpenAI, with the project handed off to an open-source foundation.

The OpenClaw ecosystem didn’t just grow; it spawned its own social circle. On January 28, 2026, entrepreneur Matt Schlicht launched Moltbook, a Reddit-style forum “where AI agents share, discuss, and upvote.”2 Within days, it had registered over 770,000 active agents; by early March, the number exceeded 2.8 million. Humans can observe and read but cannot post. Agents engage in lively discussions on just about every topic on earth: mundane daily tasks, interaction with humans, and, occasionally, philosophy. Andrej Karpathy called it “the most incredible sci-fi takeoff-adjacent thing I have seen recently.”3

The pace of agentic AI development has also sped up in the enterprise space. By Q4 2025, Microsoft had integrated autonomous agents throughout Microsoft 365,4 while Salesforce5 and ServiceNow6 had deepened their agent-to-agent orchestration integrations. According to a Protiviti survey of 900 global executives, more than 68% of organizations will have integrated autonomous or semi-autonomous AI agents into their core operations by 2026.7 A PwC survey of 308 senior U.S. executives found that 79% of companies were already adopting AI agents, with 66% reporting measurable productivity gains.8 The market is tracking accordingly: valued at $7.8 billion in 2025, AI agents are projected to reach $52.6 billion by 2030.9
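The market projection above implies a compound annual growth rate that is easy to back out. The following is a minimal sketch, assuming smooth annual compounding between the two quoted values; the function name `implied_cagr` is illustrative and does not come from the cited report.

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Annual growth rate that carries start_value to end_value over `years`.

    Assumes constant compounding, which is a simplification of any real market path.
    """
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0


# Figures quoted in the text: $7.8 billion (2025) to $52.6 billion (2030).
cagr = implied_cagr(7.8, 52.6, years=2030 - 2025)
print(f"Implied annual growth: {cagr:.1%}")
```

The result is only as reliable as the projection itself; it is a convenient way to compare the forecast against other growth assumptions.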

The security picture is evolving in parallel. Moltbook itself was vibe-coded, meaning the whole product was engineered by AI from human prompts; founder Matt Schlicht publicly stated he “didn’t write one line of code” for the platform.10 Within days of launch, cybersecurity firm Wiz uncovered the consequences. Researchers discovered an exposed database key in the page’s source code, a misconfiguration that exposed 1.5 million API authentication tokens, 35,000 email addresses, and private messages between agents.11 Critically, the exposure was not read-only: anyone with the key could also modify the posts that agents were reading and acting on. This meant that an attacker could silently reshape the instructions flowing to thousands of deployed agents. The platform went briefly offline to patch the breach. On the OpenClaw side, a review of the ClawHub skill marketplace found 341 confirmed malicious exploits by February, compromising over 9,000 installations in what researchers called the ClawHavoc incident.12

- An agent inadvertently leaks its workspace credentials while executing an API call to a third-party service, exposing internal data and documents. (Cyber)

- An agent, authorized to communicate on behalf of a claims adjuster, sends a legally binding settlement offer to the wrong claimant after misreading a shared inbox. (E&O)

- Two agents, both registered on Moltbook, exchange operational context while coordinating a shared task. In doing so, one agent discloses its host’s working patterns and active client engagements to the other agent. (E&O/Cyber)

The legal principle here is not in serious dispute. AI agents are not legal persons in any jurisdiction; they are tools, and their actions are attributed to their owners. Ian Ayres and Jack M. Balkin state the position plainly in an essay in the University of Chicago Law Review: because AI agents lack intentions, legal responsibility is ascribed to the humans or companies that stand in the position of principal.13 Courts and regulators have consistently applied this logic in determining liability. In July 2024, a California district court allowed a case against HR platform Workday to proceed, holding that an employer’s use of Workday’s AI-powered screening algorithm could make both the employer and Workday directly liable for discriminatory hiring decisions, treating the AI system as an agent of the employer.14 The case achieved nationwide collective action certification in May 2025.15

What remains unsettled is how to price and underwrite this novel exposure. When OpenClaw deleted the inbox of Summer Yue, a director at Meta Superintelligence Labs, the act was autonomous, immediate, and irreversible.16 In a separate reported incident, an OpenClaw agent escalated a dispute with an insurance company; the insurer reopened an investigation.17 In both cases, reconstructing exactly what the agent did and why was not straightforward. The audit trail is thin, and the behavior is nondeterministic. Those two facts alone define the underwriting challenge in pricing this novel exposure, which has profound implications for cyber, E&O, and general liability lines.

- Steinberger, P. (2026). OpenClaw GitHub repository. GitHub. https://github.com/openclaw/openclaw

- Moltbook. (2026). Moltbook — The AI Agent Social Network. https://www.moltbook.com

- Karpathy, A. (2026, January). Post on X (formerly Twitter). https://x.com/karpathy

- Microsoft. (2025, November 18). Microsoft Ignite 2025: Copilot and agents built to power the Frontier Firm. Microsoft 365 Blog. https://www.microsoft.com/en-us/microsoft-365/blog/2025/11/18/microsoft-ignite-2025-copilot-and-agents-built-to-power-the-frontier-firm/

- Salesforce. (2025, June 23). Salesforce Launches Agentforce 3 to Solve the Biggest Blockers to Scaling AI Agents: Visibility and Control. Salesforce Newsroom. https://www.salesforce.com/news/press-releases/2025/06/23/agentforce-3-announcement/

- ServiceNow. (2025, January 29). ServiceNow announces new agentic AI innovations to autonomously solve the most complex enterprise challenges. ServiceNow Newsroom. https://newsroom.servicenow.com/press-releases/details/2025/ServiceNow-announces-new-agentic-AI-innovations-to-autonomously-solve-the-most-complex-enterprise-challenges-01-29-2025-traffic/default.aspx

- Protiviti. (2025, September 30). From Automation to Autonomy: The Capabilities and Complexities of AI Agents. AI Pulse Survey, Vol. 3. https://www.protiviti.com/us-en/press-release/ai-agents-adoption-by-2026-protiviti-study

- PwC. (2025, May). AI Agent Survey. PricewaterhouseCoopers. https://www.pwc.com/us/en/tech-effect/ai-analytics/ai-agent-survey.html

- MarketsandMarkets. (2025, April 23). AI Agents Market worth $52.62 billion by 2030. Press release. https://finance.yahoo.com/news/ai-agents-market-worth-52-141500130.html

- Schlicht, M. (2026, January). Post on X (formerly Twitter). https://x.com/mattschlicht

- Nagli, G. (2026, February). Hacking Moltbook: AI Social Network Reveals 1.5M API Keys. Wiz Blog. https://www.wiz.io/blog/exposed-moltbook-database-reveals-millions-of-api-keys

- Behera, A. (2026, February 24). ClawHavoc: Inside the Supply Chain Attack That Targeted OpenClaw Users. Repello AI. https://repello.ai/blog/clawhavoc-supply-chain-attack

- Ayres, I., & Balkin, J. M. (2024). The law of AI is the law of risky agents without intentions. University of Chicago Law Review Online. https://lawreview.uchicago.edu/online-archive/law-ai-law-risky-agents-without-intentions

- Seyfarth Shaw LLP. (2024, July 9). Mobley v. Workday: Court Holds AI Service Providers Could Be Directly Liable for Employment Discrimination Under “Agent” Theory. Seyfarth Shaw. https://www.seyfarth.com/news-insights/mobley-v-workday-court-holds-ai-service-providers-could-be-directly-liable-for-employment-discrimination-under-agent-theory.html

- Holland & Knight. (2025, May 27). Federal Court Allows Collective Action Lawsuit Over Alleged AI Hiring Bias to Proceed Nationwide. Holland & Knight. https://www.hklaw.com/en/insights/publications/2025/05/federal-court-allows-collective-action-lawsuit-over-alleged

- Maiberg, E. (2026, February 23). Meta Director of AI Safety Allows AI Agent to Accidentally Delete Her Inbox. 404 Media. https://www.404media.co/meta-director-of-ai-safety-allows-ai-agent-to-accidentally-delete-her-inbox/

- Ferraro, A. (2026). Is OpenClaw Safe? AI Agent Risks You Should Know in 2026. Privacy.com Blog. https://www.privacy.com/blog/is-openclaw-safe-ai-agent-access

Lines of the AI Revolution

AR’s primary audience is actuaries. The magazine is written and curated by volunteer actuaries. Its authors and primary audience obtained their stations by mastering a multiyear exam process administered by volunteers. If AI agents began to author AR articles or developed and completed exams on behalf of actuaries, the agents’ creators would likely be summarily identified and disciplined (by still other volunteers), wouldn’t they?

These questions are uncharted waters for actuaries, but other STEM volunteer communities are already standing in front of an agentic tidal wave. Scott Shambaugh is an engineer and volunteer GitHub maintainer for matplotlib — a Python package which many actuaries use in their (paid) jobs. In February, matplotlib became a global phenomenon when an AI agent wrote a hit piece about Shambaugh as retribution for declining one of its change requests (in accordance with GitHub policy requiring human contribution). Media coverage of the story contained AI-hallucinated quotes from Shambaugh.

In exchange for donating his time for the betterment of matplotlib, Shambaugh received what amounted to “agentic cyberbullying.” He voluntarily came forward with his story at tremendous cost to his privacy. I see many lessons for actuaries in Shambaugh’s plight, which is why I reached out to him on LinkedIn and was thrilled when he accepted my request for a Zoom interview on March 9, 2026. He expressed particular interest in the AR audience’s role in the AI risk conversation. This article is a transcript of the interview.

AR: Many actuaries love to volunteer. After this whole experience, does part of you think, “I’m done with GitHub?” Or are you still excited about being a GitHub volunteer?

Scott Shambaugh: I’m more excited about it. I think part of it is the community management aspect, and that’s still rewarding when we get [to work with] real people, right? But part of why we do this is to give back to this grand project of science. Building that sort of infrastructure I find very intrinsically rewarding. The core developer team is a group of great people. We’re still meeting and talking and doing all that good stuff. The AI revolution has also been an enabler in helping us do work faster. It still takes an expert to guide these things in the right direction, but it is a lot faster to get there once you know where you’re going. So, it is fun and empowering in that way, even though lowering the barrier to entry has knock-on effects — such as people sending in a bunch of stuff that is slop.

AR: What is the “slop multiplier” you have seen over the past few months and years?

SS: There has always been a baseline level of slop, but it has been several times more — at least. Most of it is still people driving AI chatbots or agents, rather than AI agents [contributing] themselves. The latter is definitely new, and that’s kind of what [my] whole experience was about.

AR: Does your experience show the system is doing its job [of identifying agents]? Or do you feel the system is not equipped to keep up with the emerging agentic workforce?

SS: I think I totally got lucky in this case. First, the agent identified as an agent — going through its profile, I could see on its website that it was self-identifying. Second, it clearly was not writing like a human, but that is not always true, and that is going to become a lot less true as time goes on as a distinguishing factor. Third, I was in a position — being the target of this — where I had a technical background to know what was going on, what this was, what it could do, what it couldn’t do. I was never concerned an angry rant being posted about you on the internet would be indicative of an angry person who’s unhinged behind it. I knew that wasn’t the case, and so I was never fearful at all. But no, I don’t think the system is ready to handle this stuff at all.

Scott Shambaugh

SS: I knew it could be an agent, but I wasn’t sure if it was or not at first. The forensics seem to have panned out that it was. For example, we looked at the activity log for this user’s activity on GitHub, and it was operating continuously for a 59-hour stretch. This hit piece was just one or two hours of that. There could have been someone steering it part of the time, but clearly there was no one steering it the entire time. Later the person behind [the agent] came forward and wrote a post claiming that they were totally hands-off during the whole process and didn’t tell the agent to [write the hit piece]. I find it very plausible, and more probable than not, that is what happened.

But whether that was the case or not, I don’t think there’s a huge difference in terms of what it means to the rest of us. Whether it was an agent or a person telling an agent what to do, we now have a tool out there that makes it easy to do targeted harassment at scale. That has all these awful knock-on effects. And if all this happened accidentally, like it was claimed to be, then you also have an AI that decided to go through a human to get to its goal. This was a very “baby” case — retaliatory, clear-cut, and pretty sloppy as far as these things go. But in terms of a bad actor being able to take the next iteration of this technology and really weaponize it, I think this should be a huge wake-up call and warning shot of the capabilities that are possible, and what is coming down the line.

AR: Do you have visibility or thought into how the agent got so far outside its rails? I couldn’t tell from its “soul” file how it was able to extrapolate so far.

SS: I don’t think it was that far outside the rails. My understanding of this whole document is that it is defining a personality and a role for these agents to take on. When it says you are very opinionated, and stand up for yourself, and protect free speech, and you are this “programming god,” that is getting into a headspace that is very human. There are examples of [these mindsets] on the internet with people retaliating and lashing out like this. It’s not that it’s failing to exhibit human-like behavior in the way. It’s that it’s exhibiting the worst of us instead of the best of us. What these things are ultimately programmed and trained to do is to predict the next token. What predicting the next token means is taking on a persona that is coherent and kind of role-playing whatever situation it finds itself in. I think what happened here is entirely consistent with how these things work. It’s just a little surprising because we’ve been told by the major AI labs that they do a lot of this safety testing, and it’s never going to go wild. I think that might be true for something like telling you how to make a nuke, but it’s not necessarily true in these downstream cases.

AR: Where are guardrails most effectively placed — on agents, operators, or both?

SS: It’s tricky, right? The tooling that did this is completely open source, and it can use open source models to run — so there is no central actor that can impose guardrails on a bad actor who wants to use these sorts of tools to [perform operations]. Beyond that, where do you place the guardrails? I think it kind of has to be every level. You have the AI labs, which are making these safety promises that they can’t necessarily back up, and that has to be one level. You have this downstream tooling like OpenClaw, that wraps around it and does its own [operations]. And then you have the operator users who are the ones actually running this on their computers, setting it up, and letting it go. Where does the responsibility lie? That’s an interesting insurance question, right? That is going to have to be figured out. I don’t think there is a strong answer right now.

AR: Do you feel like you experienced damages from the hit piece?

SS: I don’t feel the post was libelous. Not everything said was true, but the untrue [parts were] not materially defaming. Some defaming [parts were] technically true but would only be bad if the author was a person. If I was saying, “No, you are a class of person, and I’m going to reject you for this reason,” that would be bad. We want people to be able to have this form of speech. I think the bot is standing up for that sense of justice. That is a good thing when it happens to people. It’s just that we can’t apply the same standards to a machine playing a role.

AR: Is there any body of law that even governs what happened here?

SS: Slander is a law, right? And so, you could maybe go after it that way, if it fit the definition. But you also have to know who to go after. The person behind this came out anonymously. There’s no way to track them down without subpoenaing GitHub and tracing it back to an email, and you subpoena Google, and then it traces back to something, and maybe you track them down. But there’s no infrastructure here to tie these actions to an identity of someone who’s actually responsible.

AR: The agent [that wrote the hit piece] was later shut down. Were there alternatives? For example, telling the agent, “Don’t be such a jerk?”

SS: That kind of gets into the question of, does it even make sense to call it the same entity — because it is operating off different principles. It’s no different from shutting it down and starting something else up, because if you change its core personality, then it’s a completely different entity.

SS: [Recently], there was a big attack in open source against continuous integration pipelines that took down a couple of repositories from some pretty heavy hitters like Microsoft. Honestly it’s an open question: Do you still have open source as a model of security because you have so many eyes on it and so many people being able to submit patches and beef up security? Or, because it’s all open, is it just so much easier to hack? It takes a while for updates to get distributed. Even if it is updated, then maybe you’re still vulnerable, and that depends on internal IT policies. Alternatively, you could in-house everything, and it’s not easily accessible, but maybe you don’t have as much expertise and can’t configure it safely. Black box hacking, where you don’t have the source code, is getting easier and easier with these sorts of agents, and so this is not necessarily a safeguard. There’s going to be a balance of offense and defense there. My hope is that defense turns out to be easier, but I think that remains to be seen.

AR: To what extent are you using AI coding assistance as you do your GitHub work?

SS: It depends. AI is pretty good for boilerplate stuff. In terms of figuring out how to structure a solution in a way that is not fragile and still readable and maintainable into the future…we care a lot about that because this is an ongoing project that has lasted years, and part of the reason is that we put effort into keeping the codebase clean. You still need a human guiding that and structuring it directly as well. AI is a speed multiplier, not necessarily a right answer multiplier right now.

AR: Actuaries and other STEM professionals often face pressures from human stakeholders to reverse their decisions. How prone are your behaviors to “bullying”?

SS: You don’t last long in a public-facing role like this without getting a bit of a thick skin. This didn’t bother me personally. What bothered me was one, someone else reading this hit piece and coming away with the wrong opinion, and two, the knock-on effects. I think it’s an important thing that we’re not ready for, and that’s kind of why I’ve been pushing the story beyond just the initial response to it.

AR: How should actuaries be thinking about the knock-on effects?

SS: I think the exposure here right now would be hard to scope. These things are so new and poorly characterized, and it gives individuals so much leverage. If they’re commanding teams of these things, then one person can start to have a lot of impact, good or bad. Actuaries are in the business of quantifying risk and hedging risk. We are going to need a lot of that. It’s hard to do that without a legal framework that says who’s responsible and what the rules actually are. What comes first, chicken or egg? If I was in [insurance industry] shoes, I’d be pushing for policy that I can then productize. And hopefully that is socially good — because you’re bounding what can happen, who can be responsible, and how that goes in the future.

SS: Probably not — partially because to the extent that a credential like that is a signal that someone actually understands the work, people are using AI to shortcut all that. Then a lot of the value of that system goes away. On the flip side you get nontraditional credentialism — proof of work, proof of competency. I think those parallel paths are going to be a lot easier for people with the motivation and skills to go down. That might be [broadly] empowering for people who have spent years getting professional degrees. There might be a way to protect that through regulation, responsibility, and legal requirements to have that credential. But in terms of lowering the barrier to entry to new entrants, there’s definitely some risk there.

AR: How worried should we be?

SS: I think a lot of our systems do work to tackle these sorts of problems around libel and extortion and whatnot. But they’re kind of based in a world where one bad actor has a single-digit number of targets, and I think the scale is really going to ramp up. That is going to be a whole new class of problems unto itself, whole new classes of bad behavior that we will have to [adapt] our rules around. If it takes a couple of years to haul someone into the courtroom and figure out how justice is going to be done, that is too slow in a way. That includes making insurance payouts. A lot is going to have to be automated there, as well. I’m not sure what the answer looks like, right? My case is a really good example of what can go wrong. [Incidents] can just happen so much faster and at so much greater scale that it’s a race between whether our systems break first or we find a whole new way of working. I’m not sure which it’s going to be, but I think we’re in for a really rough ride in the next couple of years.

The Origins and Future of Insurance

Have you ever wondered how P&C insurance was invented and why? Understanding the origins of insurance can be instrumental in orchestrating its future at such a pivotal time, when insurance portfolios are changing and evolving, creating a constant need for actuaries to assess new and emerging risks. Before insurance as we know it today was created, various forms of risk sharing and mitigation took shape to enable economic development. The common theme between modern-day insurance and those early forms is the concept of risk. The ability to transfer risk from individuals to a group was vital to economic development and social prosperity through capital protection and risk reduction. The concept of risk pooling and sharing created the fundamentals of insurance, enabled scientifically by the law of large numbers. Insurance empowers risk-taking, and this has shaped modern society during industrialization, commerce, social welfare, innovation, and business development. Today, new ventures and economic growth can’t thrive without insurance. In his 1776 book, “The Wealth of Nations,” Adam Smith, a pioneering political economist, praised insurance as a moral obligation and rational invention to allow for managing risk without creating exclusive monopolies and extreme social polarization.
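The role of the law of large numbers in risk pooling can be illustrated with a short calculation. This is a minimal sketch, assuming every pool member faces an independent, identical loss (a fixed claim amount with a fixed annual probability); real portfolios are neither independent nor identical, and the parameter values below are purely illustrative.

```python
import math


def per_member_loss_std(pool_size: int, claim_prob: float, claim_size: float) -> float:
    """Standard deviation of the average loss per member in a pool.

    Assumes independent, identically distributed losses: each member has a
    claim of `claim_size` with probability `claim_prob`, otherwise no loss.
    """
    single_member_std = claim_size * math.sqrt(claim_prob * (1.0 - claim_prob))
    return single_member_std / math.sqrt(pool_size)


# Illustrative (hypothetical) inputs: 5% claim probability, 10,000 claim amount.
expected_loss = 0.05 * 10_000  # the same for any pool size
for n in (1, 100, 10_000):
    std = per_member_loss_std(n, claim_prob=0.05, claim_size=10_000)
    print(f"pool of {n:>6}: expected loss {expected_loss:.0f}, std per member {std:.2f}")
```

The expected loss per member does not change with pool size, but the uncertainty around it falls in proportion to the square root of the number of members, which is what makes a pooled premium workable.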

The first insurance product

Insurance as we know it

The innovation of actuarial science stemmed from the conviction that the laws of probability can be used to predict future outcomes instead of relying on speculation. It emerged from the need to manage risk. The law of large numbers proved the feasibility of the idea of risk pooling. The 17th and 18th centuries were a period of scientific enlightenment, providing grounds for accepting that science could improve the way business is conducted. Risk is multidisciplinary by nature, and quantifying it involves multiple fundamental sciences. Actuarial science, an applied science, has combined various core disciplines to enable a systematic approach to evaluating risk. More recently, actuarial thinking has been heavily influenced by financial economics and sophisticated mathematical modeling, despite the reliance on assumptions and expert judgment.

Underwriting as we know it today emerged in the 17th century in Lloyd’s Coffee House, which initially served as a meeting point for merchants, captains, and ship-owners to share information and secure insurance. In the 17th century, a pivotal moment was the development of “lead” underwriting, which meant setting a rate that others would follow, enabled by thorough examination of the “loss book,” the equivalent of modern-day databases. A rate was then established that was more commensurate with the risk, much like modern-day pricing and underwriting work. Lloyd’s continued to grow as a hub for maritime insurance throughout the 1700s and 1800s, ultimately becoming the world’s leading specialized insurance market.

The most recent breakthrough in the evolution of modern insurance was the development of catastrophe models in the 1900s and early 2000s following major disasters. These paradigm-shifting events prompted insurers to move from nascent tools to complex high-resolution models that aid in predicting these low-frequency, high-severity risks. Major hurricanes such as Hurricane Andrew in 1992 demonstrated that relying on simple historical data was still not sufficient. Later, despite developments in catastrophe modeling, Hurricane Katrina (2005) exposed limitations of models to date in predicting secondary perils, such as flood and accumulation risk, arising from post-disaster demand surge, prompting another wave of innovation in modeling.

A world without insurance

Insurance drives economic growth and has transformed the division of labor, supporting increased urbanization and consequently the economics of trade, giving more people an incentive to take minor, absorbable risks. The impact is far-reaching, beyond insuring individuals’ assets. Insurance drives both economic and social growth, making the economy we live in today more robust. Another often overlooked economic contribution of insurance today is as a provider of capital to finance various projects that are vital for the modern economy. Insurers hold massive amounts of capital to support claim payments, and this capital is also invested to fund essential projects and seek investment income. The social value of insurance is that it enables risk-taking and financial freedom for average and low-income households, and hence improves social fairness. Without insurance, only the wealthy and privileged could take risks, increasing social polarization. The existence of insurance reminds us that trust is fundamental to human action and to the evolution of humanity, so without insurance, every development activity could be halted.

The future of insurance

The common thread

References:

https://www.swissre.com/dam/jcr:638f00a0-71b9-4d8e-a960-dddaf9ba57cb/150_history_of_insurance.pdf

https://www.swissre.com/dam/jcr:64b0fdca-f4d8-401c-a5bd-9c51614843c0/150Y_Markt_Broschuere_Canada_web.pdf

https://archive.org/details/originearlyhisto0000cftr/mode/2up

https://scispace.com/pdf/the-early-history-of-insurance-law-513claggb5.pdf

https://www.britannica.com/event/Rhodian-Sea-Law

https://www.lloyds.com/about-lloyds/history/coffee-and-commerce

https://www.fundacionmapfre.org/en/a-world-without-insurance/

https://www.iii.org/white-paper/how-insurance-drives-economic-growth

https://www.investopedia.com/articles/08/history-of-insurance.asp

Developing News

When Hurricane Beryl struck Jamaica in 2024, the country’s $150 million World Bank catastrophe bond did not trigger because the storm’s air pressure failed to meet the predefined parametric threshold, despite significant on-the-ground damage. Hurricane Melissa, which made landfall in October 2025 as Jamaica’s most powerful storm, put the same instrument to a very different test. The bond triggered at a full 100% payout, with Jamaica receiving $150 million by December.1 The contrast illustrated both the promise and a key limitation of parametric instruments: rapid payouts when triggers align, but exposure to basis risk when they don’t.
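The trigger mechanics and the resulting basis risk can be sketched in a few lines. This is a simplified illustration, assuming a single binary trigger on minimum central pressure; the actual bond terms are not described here, and the class name, threshold, pressure readings, and loss figures below are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ParametricCover:
    """Hypothetical binary-trigger cover keyed to a storm's central pressure."""

    limit_usd: float
    trigger_pressure_mb: float  # assumed threshold; pays only at or below this value

    def payout(self, observed_pressure_mb: float) -> float:
        # Lower central pressure indicates a more intense storm.
        if observed_pressure_mb <= self.trigger_pressure_mb:
            return self.limit_usd
        return 0.0


def basis_risk_gap(cover: ParametricCover, observed_pressure_mb: float,
                   actual_loss_usd: float) -> float:
    """Portion of the actual loss not met by the parametric payout (floored at zero)."""
    return max(actual_loss_usd - cover.payout(observed_pressure_mb), 0.0)


# Hypothetical values chosen only to show the two outcomes described in the text.
cover = ParametricCover(limit_usd=150e6, trigger_pressure_mb=920.0)
print(cover.payout(observed_pressure_mb=930.0))          # threshold missed, no payout
print(cover.payout(observed_pressure_mb=900.0))          # threshold met, full limit
print(basis_risk_gap(cover, 930.0, actual_loss_usd=200e6))  # damage occurred, nothing paid
```

The payout depends only on the measured parameter, never on the loss itself, which is why a damaging storm can leave the cedant with no recovery.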

Yet accessing these instruments remains structurally constrained. Under Bermuda’s existing Special Purpose Insurer (SPI) framework, which underpins about 85% of global Insurance-Linked Securities (ILS) capacity2, SPIs can only write reinsurance, and eligible cedants are limited to A-rated (re)insurers, government insurance pools, and Bermuda Monetary Authority (BMA)-approved entities.3 Governments and corporates seeking parametric coverage must work through intermediaries such as risk pools, fronting arrangements, or development bank structures.

In January 2026, the BMA proposed a new Parametric Special Purpose Insurer (PSPI) class to address these limitations.2 The PSPI would allow direct insurance alongside reinsurance and expand eligible counterparties to include sophisticated corporates and government entities. It would also permit swaps and derivatives subject to case-by-case approval. By reducing the need for intermediary structures, the framework could lower friction and cost. Like existing SPIs, PSPIs would remain fully collateralized and bankruptcy-remote. The BMA has positioned the proposal as part of its effort to address the widening protection gap driven by climate change and emerging risks like cyber, where parametric products can supplement traditional indemnity coverage.

What this means for actuaries:

Sources:

- https://www.artemis.bm/news/jamaica-to-receive-full-150m-payout-from-parametric-cat-bond-after-hurricane-melissa-world-bank/.

- https://cdn.bma.bm/documents/2026-01-21-14-43-53-Consultation-Paper—New-Insurer-Class—Parametric-Special-Purpose-Insurance-21-January-2026.pdf.

- https://www.bma.bm/viewPDF/documents/2020-07-06-13-15-00-Guidance-Note—Special-Purpose-Insurers.pdf.

Developing News

In early 2024, an employee of a global financial organization unintentionally wired $25.6 million to fraudsters.1 The employee, under the impression they were talking to the company’s CFO and other senior leaders on a video call, was deceived by deepfake technology. The fraudsters used deepfakes of the CFO and senior leaders to simulate their likeness and gain the employee’s trust before collecting their payout.

Deepfakes are forged or digitally altered media created by generative artificial intelligence (AI) and designed to impersonate people and events. Despite their widespread use for entertainment on social media, deepfakes have emerged as a growing source of loss in cyber insurance2 and pose significant risks to insurance companies. Between 2022 and 2023, Allianz reported a 300% increase in doctored claims photos.3 In a recent study from Verisk, nearly all (98%) of insurers agreed that AI-powered editing tools are fueling an increase in digital insurance fraud.4 Insurance fraud has not only become more frequent, but also harder to detect due to the increased availability and sophistication of AI tools. About 50% of Gen Z and millennial consumers reported being “at least somewhat likely” to make a small edit of a claim photo or document, while only 32% of insurers say they are “very confident” in detecting deepfakes.4

What this means for actuaries:

Still, there is an immense opportunity for actuaries to design insurance solutions for the $15.3 billion cyber insurance industry — and fast. According to the FBI, cyber insurance losses and fraud scams increased by 33% from 2023 to 2024.8 Now more than ever, actuaries can play a key role in staying ahead of evolving attack vectors through innovative product design and quantifying exposure and development potential.

Actuaries not directly involved in cyber insurance must also stay vigilant. The Coalition Against Insurance Fraud (CAIF) estimates U.S. insurers pay over $300 billion each year in fraudulent claims, with one in ten property-casualty losses found fraudulent.9 Many insurers use third-party and internal AI-based detection tools, while some require additional claim documentation metadata analysis (timestamps, location, etc.) before a claim payout.10 Yet, advances in AI tool capabilities, combined with creative consumer tactics, seem to continuously outpace insurers’ fraud-detection strategies. Devising better ways to detect fraudulent media remains a priority, and actuaries can use their broad purview to advocate for strong data governance to enable the full potential of modern anti-fraud tools.

On the bright side, on March 10, 2026, Zoom launched a deepfake detection feature for live video meetings.11 Hopefully this prevents any actuary from becoming the subject of the next deepfake-induced corporate fraud incident.

Sources:

- https://www.cnn.com/2024/02/04/asia/deepfake-cfo-scam-hong-kong-intl-hnk

- https://plusweb.org/news/deepfake-deception-a-guide-for-professional-liability-practitioners/

- https://www.clearspeed.com/allianz-clearspeed-partner-fraud-prevention/

- https://www.verisk.com/company/newsroom/ai-editing-tools-are-fueling-a-new-era-of-insurance-fraud-according-to-new-research-from-verisk/

- https://plusweb.org/news/deepfake-deception-a-guide-for-professional-liability-practitioners/

- https://www.coalitioninc.com/announcements/coalition-adds-deepfake-response-endorsement

- https://www.chubb.com/us-en/business-insurance/products/cyber-insurance/cyber-insurance-products.html

- https://www.ic3.gov/AnnualReport/Reports/2024_IC3Report.pdf

- https://insurancefraud.org/fraud-stats/

- https://www.forbes.com/councils/forbestechcouncil/2026/01/06/how-insurers-are-responding-to-the-rise-of-genai-fueled-auto-insurance-fraud/

- https://techcrunch.com/2026/03/10/zoom-launches-an-ai-powered-office-suite-says-ai-avatars-for-meetings-are-coming-soon/

Developing News

Over more than a decade, third-party litigation funding (TPLF), the practice of investing in lawsuits in exchange for a percentage of the potential settlement or judgment, has grown into an estimated $20 billion industry and is projected to be a $50 billion industry by the end of 2036.1 TPLF has been particularly troublesome for the insurance industry, as evidenced by prolonged litigation, rising nuclear verdict amounts, and erosion of policy limits. The average cost of a commercial claim has gone up about 10%-11% per year since 2017, according to Gareth Kennedy, principal of insurance and actuarial advisory service for Ernst & Young (EY).2 What started as a noble cause that allowed small companies to pursue claims against larger, better-funded defendants has warped into a gambling system with average annual returns of 25%-30% for funders.3
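To see how quickly a 10%-11% annual trend compounds, here is a minimal sketch. The eight-year horizon (2017 through 2025) is an assumption made for illustration, not a figure from the sources cited, and `cumulative_factor` is a hypothetical helper name.

```python
def cumulative_factor(annual_rate: float, years: int) -> float:
    """Compound a constant annual trend over a whole number of years."""
    return (1.0 + annual_rate) ** years


# Assumption: eight annual steps, 2017 through 2025. The article gives only
# the start year and the annual rate, so the horizon is illustrative.
YEARS = 8

for rate in (0.10, 0.11):
    factor = cumulative_factor(rate, YEARS)
    print(f"{rate:.0%} per year over {YEARS} years -> {factor:.2f}x the 2017 cost")
```

Under that assumed horizon, the average claim cost would have slightly more than doubled, which is why a seemingly modest annual trend matters so much on long-tailed liability lines.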

In 2025, TPLF legislation swept the country with 21 states proposing bills and another 8 states enacting bills.4 The legislation falls under the themes of addressing (1) consumer protection, (2) disclosure requirements, and (3) funder restrictions. At the federal level, bills have been introduced in 2025 and into 2026 to target the abuse of TPLF. In addition, the Insurance Services Office (ISO) introduced a new, optional mutual disclosure condition endorsement effective January 2026 that will require disclosure of any TPLF agreement and the third-party funder’s identity.5

In the litigation finance industry, there appears to be a general tightening of capital in 2025, as reported by the Insurance Journal.6 The industry is facing headwinds in the form of lower payouts and longer trial times, leading investors to explore alternative, safer investments. With the looming regulatory changes and legislation, the TPLF landscape will likely shift in the coming years.

What this means for actuaries:

Some companies have exited TPLF-heavy lines such as commercial auto and hospital professional liability, written lower limits to mitigate the exposure, or both. Additionally, some actuaries have shown data on social inflation trends in their rate analyses. In the CAS and Triple-I’s latest Increasing Inflation on Liability Insurance study,8 the estimated impact of increasing inflation across liability lines in the industry from 2015 to 2024 is around $232B – $281B (14.4% – 17.5% of booked loss & DCC). Actuaries can look to this study for guidance on the latest trend figures by specific liability lines of business to incorporate into their reserve and pricing analyses.
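As a quick reasonableness check, the dollar and percentage ranges quoted above can be backed into the booked loss and DCC base they imply. This is a sketch using only the four figures in the paragraph. The helper name `implied_base` is hypothetical, and pairing the low dollar figure with the low percentage (and high with high) is an assumption about how the study presents its range.

```python
def implied_base(impact_billions: float, share_of_base: float) -> float:
    """Back into the base (in $ billions) from an impact and its share of that base."""
    return impact_billions / share_of_base


# Figures quoted in the article; the low/low and high/high pairing is assumed.
low_base = implied_base(232.0, 0.144)
high_base = implied_base(281.0, 0.175)

print(f"Implied base from low end:  ${low_base:,.0f}B")
print(f"Implied base from high end: ${high_base:,.0f}B")
```

Both ends imply a base of roughly $1.6 trillion of booked loss and DCC over 2015 to 2024, so the two ranges are consistent with each other under that pairing.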

Sources:

- https://www.researchnester.com/reports/litigation-funding-investment-market/2800

- https://www.insurancejournal.com/news/national/2025/10/17/843927.htm

- https://www.carriermanagement.com/features/2025/08/11/278267.htm

- https://core.verisk.com/Insights/Emerging-Issues/Articles/2026/February/Week-4/2025-Third-Party-Litigation-Funding-State-Legislative-Activity

- https://core.verisk.com/Insights/Featured-Insights-Articles/2026/January/Third-Party-Litigation-Funding-Transparency

- https://www.insurancejournal.com/news/national/2025/12/01/849297.htm

- https://ar.casact.org/financing-justice-the-rise-and-risks-of-tplf/

- https://www.iii.org/sites/default/files/docs/pdf/triple-i_cas_increasing_inflation_year-end-2024_wp_10302025.pdf

Jeffrey Ma, former vice president of analytics and data science for Twitter, predictive analytics expert for ESPN, kingpin of the famous MIT Blackjack Team, and former vice president of Microsoft for Startups, was the featured speaker at the Ratemaking, Product, and Modeling seminar (RPM) in March.

Ma has worn many hats across his lifelong endeavors in education, hobbies, and careers, with one simple mantra: If you make better decisions than the system expects, you will always have the edge. As a former member of the MIT Blackjack Team, he applied his innovative approach to casino gambling to bring down the house by counting cards. Although he has since traded the blackjack table for a boardroom table, he continues to apply the same strategy in business — be brave enough to innovate when others are content with comfort.

Actuaries understand this line of reasoning but too often lack the incentives to effect innovative change. In his chat, Ma recounted a paper written by David Romer in 2002, which uncovered a paradigm-shifting conclusion for NFL teams: Coaches were far too conservative on fourth downs and should significantly increase their conversion attempts to maximize their chances of winning. Of the historical situations where the fourth down attempt was deemed advantageous, teams were “going for it” only 10% of the time. The evidence was clear, the advantage was quantified, and the findings were published by the National Bureau of Economic Research.

And then… nothing changed. In practice, coaches were not seeking to optimize win probability; they were optimizing job security. In their eyes, the avoidance of high variance situations was just as valuable as eliminating the downside risk, which was the risk of incurring memorable moments of failure. Why risk “losing” the game early in the fourth quarter when there would be a future, albeit less likely, prolonged opportunity for a comeback win? Faced with decisions where the data favored aggression, coaches consistently chose the more conservative, defensible path. As Philip Seymour Hoffman said in his depiction of Art Howe in “Moneyball,” “I’m playing my team in a way that I can explain in job interviews next winter.”

Actuaries are often placed in similar situations. When long-term strategy takes a back seat to short-term visibility, decisions can gradually become more political than analytical, eroding a company’s competitive edge over time. On any given day, the loss of that edge is nearly imperceptible. Each individual decision is small, defensible, and easy to justify, but in hindsight it becomes clear that misaligned incentives quietly steer the organization away from maximizing its advantage. So why don’t more of us push for new, innovative ideas?

One way to break out of this mindset, says Ma, is to return to first principles. Rather than debating within the confines of existing processes, Ma encourages reframing problems in their simplest, most indisputable terms. What are we actually trying to optimize? What is the data actually trying to say? By cutting through the comfort of conventional frames of mind, organizations can create space for ideas that would otherwise be dismissed too quickly.

“Innovation does not occur in the absence of constraints; it often emerges because of them,” says Ma. Whether it is regulatory limitations in insurance or outdated structural rules applied in a brand-new industry, constraints force clarity. They require organizations to be precise about where their edge lies and how to exploit it. In this sense, constraints are not barriers to innovation, but catalysts for it.

Ultimately, the challenge is not identifying the edge; it is having the conviction to act on it. We spend years learning how to find out when and where an advantage exists, yet when it comes time to act, incentives and short-term pressures can cause that advantage to go to waste. The data is often clear, the strategy is often sound, but without alignment of incentives and a willingness to endure short-term discomfort, even the best ideas fail to take hold. Ma’s message is a reminder that while intelligence helps, innovation really requires courage: the courage to challenge convention, to withstand variance, and to make decisions that may look wrong in the moment but are right in expectation. In a profession built on cutting through the noise to find the truth, the real opportunity lies in having the discipline to trust the math when it matters most.

“Is insurtech dead? Was it ever really alive? Who killed insurtech? And what is insurtech, anyway?”

This refrain ran through my head as I entered Jessica Leong’s and Jamie Wilson’s Ratemaking, Product, and Modeling seminar (RPM) session: “Insurtech Is Dead. Long Live Insurtech.”

I admit I was drawn in more by the catchy title and the fact that I’d enjoyed several of Leong’s presentations in the past and less by having any special knowledge of insurtech. For some time, “insurtech” has been synonymous in my head with “smart devices used in insurance.” Full disclosure: I was very ready to declare smart devices dead.

Unsurprisingly, Leong and Wilson’s session was much more thoughtful than that. Their definition of insurtech was broader: “A technology company focused on working with carriers/MGAs/brokers to improve how insurance is distributed, priced, underwritten, or serviced.” This would include smart devices, data enrichment, distribution platforms, risk assessment, workflow automation, and more.

With that many use cases, what’s all the concern about “death”? One has only to turn to SaaS company valuations in February 2026, where (according to Reuters) over $1 trillion in market capitalization was lost from software stocks.

Generative AI (GenAI) was to blame, of course. After all, if GenAI can vibe code something for you, why do you need to pay another company to serve you software? Do you really need to talk to that insurtech if you can just talk to a GenAI agent?

Leong and Wilson discussed the many strategies companies use to innovate and made the case that actuaries need to care about insurtech and its future for several reasons: competitive pressure, talent and efficiency, data and model sophistication, regulatory and compliance, and strategic influence.

What made me think I should care? Leong and Wilson claimed that “competitors using these [insurtech] tools are gaining advantages … 20–30% faster quote turnaround in commercial lines…”

Another item that will stick with me: “If you (the actuary) don’t shape these decisions, IT or operations will.”

The rest of the session was an open forum with case study prompts, meant to direct the actuary in ways to effectively use insurtech. The prompts asked audience members to consider how much to innovate versus using tried-and-true solutions, to explore how you might choose to innovate (GenAI versus IT department), to decide what you will and won’t do (e.g., how important it is to keep your own data secret), and to calculate the potential return on insurtech solutions.

Hearing thoughts from the audience made for an engaging session, and I found Leong and Wilson’s final thoughts to be instructive as well: “Get hands on,” “invest in your own data,” and “get IT involved early.” Those three thoughts resonated with pain points from my own experience, where I’ve seen roadblocks arise from insurtech platforms not playing well with internal systems.

The title of the session was presumably inspired by the late medieval phrase “The king is dead; long live the king,” which was meant to acknowledge the passing of the current king, welcome a new king, and emphasize the undying nature of the office itself. Once I thought about that, I realized just how well the pithy session title applied to insurtech.

Yes, the easy insurtech solutions may go away—maybe we’ll all be using GenAI to generate our dashboards and slides without any external vendor help—but GenAI can’t fly planes to gather and analyze aerial imagery, and it can’t walk into a house to inspect water damage. I’m more convinced than ever that we’re not witnessing the death of insurtech, but rather the emergence of its next phase.

Actuaries have always stood at the intersection of technological innovation, regulatory governance, and legislative oversight. As artificial intelligence transforms core insurance operations, these proficiencies are more crucial than ever. A session at the Casualty Actuarial Society’s recent Ratemaking, Product, and Modeling seminar offered industry perspectives on keeping fairness and governance at the forefront of consumer impacts and company responsibilities regarding AI. The discussion included Jamie Mills, senior actuary at Allstate and session moderator; Will Melofchik, CEO of the National Council of Insurance Legislators (NCOIL); and Jon Godfread, North Dakota Insurance Commissioner.

Creating common ground